UPDATE: Newer results are now at the bottom of this article.

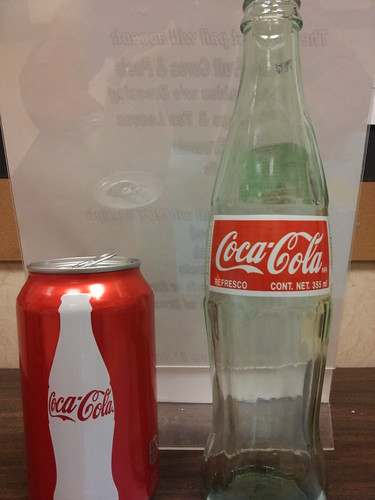

If you live in a big city, chances are that you've heard the hype about Mexican Coke, and seen it for sale in hip indie bakeries, food trucks, and coffee shops. In case you haven't: Mexican Coke is made with "real sugar," so some people go nuts for it and extol its virtues to anyone who will listen. However, I was skeptical that the difference would be that clear, since high fructose corn syrup is about 55% fructose and 42% glucose (very similar to the 50 / 50 split of table sugar, aka sucrose), and the ingredients are otherwise identical. Other than the type of sugar, the only difference on the label is that Mexican Coke has 85 mg sodium instead of 45 mg for American Coke.

I decided a good old double-blind taste test would be a good way to find out. If you're not familiar with "double-blind" studies, they ensure that the person running the experiment can't unconsciously bias the results. Why does that matter? The expectations of observers can lead to people believing that horses can do math. As I was planning the test, I found one double-blind test that found some people can tell the difference (but prefer American Coke), one test that claimed to be blind (although the tasting comments make it clear it was not) which found Mexican Coke clearly superior, and plenty of non-blind taste tests where people confirm their belief that Mexican Coke is way better.

I had several people tell me that there was a really noticeable difference in taste between the two kinds of Coke. Some people described American Coke as tasting "fake" or "chemically" or even having "an arsey aftertaste," while Mexican Coke tastes "more natural," or has "a much more complex flavor." To find out if that was true, I wanted the test to have four samples: two of Mexican Coke and two of American Coke (see the note at the bottom as to why four samples instead of three). If the taste difference was clear, tasters should be able to correctly match the two pairs of identical samples. Ideally I would have used American Coke in a glass bottle, but I couldn't find it, so I ended up using one sample of canned American Coke and one sample of American Coke from a plastic bottle. All Cokes were as fresh as possible, and kept at the same temperature in a mini fridge for several hours before the experiment.

For the experiment, I set up four identical cups for each taster, arranged in rows. I labeled each row with a letter (a through d), and poured 1 oz of each Coke into the sample cups so that each taster had the same set of four samples. I then asked a colleague (who didn't know which kind of Coke corresponded to which letter) to replace the letters with numbers while I was out of the room (so I wouldn't know which kind of Coke corresponded to each number either). We then brought in 7 more tasters (nine total), and gave each one a data sheet to indicate which samples they thought were identical, which one(s) were their favorite, and whether they were a regular drinker of Coke (EDIT: I ran two follow-up tests later, see below for details).

The basic finding was that while many of the tasters thought we tasted a difference, none of us correctly identified that the first two samples were the same (Mexican Coke), and the second two samples were the same (American Coke). Only one taster correctly identified either pair (she correctly identified that the American Coke samples were the same, but thought that the two Mexican Coke samples were different). Thus, I conclude that Mexican Coke and American Coke do not have a strong enough difference in flavor to be readily detectable.

That doesn't mean that Mexican Coke isn't perceived as better with your eyes open; it may be all in your head, but if you enjoy Mexican Coke more that is real to you. After all, even when they know that they're taking a placebo, patients may still show improved health relative to patients with no treatment.

Here is the raw data for those interested (updated to include the 2nd and 3rd tests). Samples 1 and 2 both turned out to be Mexican Coke, Sample 3 was the American Coke in a plastic bottle, Sample 4 was the American Coke in a can.

Although we had a small sample size, with nine people including three regular Coke drinkers, if there were a clear taste difference someone should have been able to detect it. In fact, by purely random guessing there was a 75% chance that at least one person would correctly pair the samples, and a 49% chance that at least one person would correctly identify all samples. See the EDIT note below for the results of a second and third taste test!
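For anyone who wants to check those two percentages, here is a minimal sketch of the arithmetic. It assumes each taster guesses independently, and it borrows the per-taster odds from the note at the end of this article (1 in 7 to pair the samples, 1 in 14 to also name which pair is which). The function name is my own, purely for illustration.

```python
def chance_at_least_one(p_single: float, n_tasters: int) -> float:
    """Probability that at least one of n independent random guessers succeeds."""
    return 1 - (1 - p_single) ** n_tasters

# Per-taster odds of a lucky guess, taken from the note at the end of the article.
P_PAIR = 1 / 7       # correctly pairing the samples
P_IDENTIFY = 1 / 14  # pairing them and also naming which Coke is which

print(round(chance_at_least_one(P_PAIR, 9), 2))      # 0.75
print(round(chance_at_least_one(P_IDENTIFY, 9), 2))  # 0.49
```

The trick is to compute the chance that everybody fails and subtract it from one, which is much easier than adding up all the ways one or more people could succeed.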

I generally have a pretty good palate, but since I never drink soft drinks, I was pretty overwhelmed by how sweet and tart the Coke was. As such, asking me to distinguish between Cokes may be like asking a classical music critic to compare Pantera to Napalm Death (i.e. there may well be a difference, with the fault lying in the observer's lack of context or training).

For a future test, it would be ideal to change the order of the tasting, as other research has found that the first sip is often perceived differently from further sips. I went back and forth several times between the samples, and the more I tasted the more they tasted identical to me (earlier I mistakenly grouped the four samples into mismatched pairs). I'd also like to do a taste test with plain sugar and plain HFCS dissolved in pure water, to see if people can tell the difference with all other factors absolutely identical.

Have you done your own taste test? If so, let me know about it in the comments!

EDIT: Since the industry standard for taste tests is a "triangle" test where two samples are identical and one is not, some people have asked why I went with four. There are two reasons. First, since I couldn't get American Coke in glass bottles, I wanted to see if a plastic bottle vs a can made a difference. With only three samples, in theory every sample could have been slightly different, whereas with four I could be confident that if there was a noticeable difference, at least two of the samples (the two Mexican Coke ones) should have been consistently paired.

The second reason is to increase the confidence of the findings. With a triangle test, a taster has a 33% chance of randomly picking which of the three samples is different ("sample #2 is different"), and a 17% chance of correctly identifying all samples by chance (e.g. "#1 is American Coke, #2 is Mexican Coke, and #3 is American Coke"). While having two samples of each kind of Coke does not by itself improve on these probabilities, telling tasters that the experimental setup is either two samples of each Coke, or one sample of one and three samples of the other, makes their chance of guessing correctly go down significantly (assuming that they don't know that I am actually providing two of each sample). This gives them a 14% chance of correctly identifying which samples are the same (e.g. "#1 & #2 are the same, and #3 and #4 are the same, but I don't know which is American and which is Mexican") and a 7% chance of correctly identifying all samples.
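The 14% and 7% figures come from counting the groupings a taster could plausibly report. The sketch below enumerates them, assuming the taster treats every two-and-two or one-and-three split as equally likely; the helper name `splits` is hypothetical and just for illustration.

```python
from itertools import combinations

cups = frozenset({1, 2, 3, 4})

def splits(group_size):
    """All ways to split the cups into one group of `group_size` and the rest."""
    return {frozenset({frozenset(g), cups - frozenset(g)})
            for g in combinations(sorted(cups), group_size)}

# 3 two-and-two splits plus 4 one-and-three splits.
groupings = splits(2) | splits(1)

p_pair = 1 / len(groupings)  # guessing which cups match
p_identify = p_pair / 2      # ...and also which group is which Coke

print(len(groupings), f"{p_pair:.0%}", f"{p_identify:.0%}")  # 7 14% 7%
```

Only one of the seven groupings is right, and a taster who also names the Cokes has two ways to label each grouping, which halves the odds again.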

I recently had a chance to repeat this test with four more tasters (this time I used American Coke in a plastic bottle and Mexican Coke in glass, but again used two samples of each). One of the tasters correctly paired the samples (although she thought the American Coke was Mexican and vice versa). However, with 13 total tasters there is an 87% chance that someone would have paired the samples correctly by chance. Even if you consider the second test as an independent event with four tasters, there is still a 46% chance that at least one taster would correctly pair the samples by guessing.
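If the formula feels too abstract, the same numbers can be sanity-checked by brute force. This is a rough simulation sketch, assuming every taster picks one of the seven possible groupings uniformly at random; the encoding of a grouping as "the set of cups grouped with cup 1" is my own shorthand, not anything from the data sheets.

```python
import random

# Each grouping is written as the set of cups that end up with cup 1.
GROUPINGS = [
    {1, 2}, {1, 3}, {1, 4},                  # two and two
    {1}, {1, 2, 3}, {1, 2, 4}, {1, 3, 4},    # one and three
]
TRUTH = {1, 2}  # cups 1 and 2 held the same Coke

def someone_pairs_correctly(n_tasters, rng):
    """True if at least one random guesser reports the true grouping."""
    return any(rng.choice(GROUPINGS) == TRUTH for _ in range(n_tasters))

def simulate(n_tasters, trials=20_000, seed=0):
    """Fraction of simulated tastings in which someone gets lucky."""
    rng = random.Random(seed)
    hits = sum(someone_pairs_correctly(n_tasters, rng) for _ in range(trials))
    return hits / trials

print(simulate(13))  # should land near 0.87
print(simulate(4))   # should land near 0.46
```

The simulated fractions wobble a little from run to run if you change the seed, but they should stay close to the calculated values.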

In order to test whether she could actually taste the difference, I set up another test. This time I went with 5 samples to increase my confidence in the results even further. I wanted to use two samples of one, and three of the other; if I used one and four and it happened that the first sample was the different one, it could yield a false positive (since there is a bias for the first sample to taste different). With two and three, even if the taster correctly assumes that is the setup (I didn't tell her how many there were of each), there is still only a 10% chance of correctly grouping all the samples by guessing, and a 5% chance of also identifying which Coke is which. Her chance of correctly distinguishing the samples on both tests by luck was only 1.4% (so if she was able to do so, we could be confident that she could really taste a difference). Of the 5 samples, she correctly identified only the last two, and incorrectly paired those samples with samples containing the other Coke product.
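Here is a short sketch of where the five-sample numbers come from, assuming a guesser who knows the split is two and three and picks among the possible arrangements at random. The 1-in-7 figure for the earlier four-cup test is carried over from the note above.

```python
from math import comb

arrangements = comb(5, 2)           # which two of the five cups hold the minority Coke
p_group_5 = 1 / arrangements        # grouping the five cups correctly
p_identify_5 = p_group_5 / 2        # ...and also naming which Coke is which

p_pair_4 = 1 / 7                    # lucky pairing in the earlier four-cup test
p_both = p_pair_4 * p_group_5       # getting lucky on both tests

print(f"{p_group_5:.0%} {p_identify_5:.0%} {p_both:.1%}")  # 10% 5% 1.4%
```

Multiplying the two probabilities treats the tests as independent, which seems reasonable for pure guessing.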

So in conclusion, fewer people correctly distinguished the samples than we would have expected by chance alone, and the one person who distinguished them once was unable to do so a second time. It is interesting to note that after the third test, several of us tasted the two samples knowing what they were, and each of us thought that there was a clear difference in taste (despite our inability to tell that with our eyes closed). A final interesting test would be to give all tasters a correctly labeled sample of each to "calibrate" their palate. Then the double-blind test could be administered to see if they do any better.

2nd EDIT: We finally had a test where the difference was detectable! However, the Mexican Coke in this batch was noticeably flatter (less carbonated), which almost certainly had an impact. Even after swirling the cups to get most of the small bubbles out, all 3 tasters tasted a difference. One got the first two samples right, but then said "once I had the American coke aftertaste in my mouth it all tasted the same." I noticed that the Mexican Coke was noticeably saltier, which on the one hand makes sense as the sodium content is almost double (85 mg vs 45 mg), but on the other hand that's a difference of about 1/64 tsp of salt in a whole can, which is a truly minuscule amount per sample cup.
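To put the salt difference in perspective, here is a back-of-the-envelope sketch using the label figures from the top of the article (85 mg and 45 mg sodium). Everything else is an assumption on my part: roughly 2,300 mg of sodium per teaspoon of table salt (a commonly quoted approximation), a 12 oz serving for both labels, and 1 oz sample cups as in the first test.

```python
SODIUM_MG_PER_TSP_SALT = 2300  # assumption: commonly quoted approximate figure
SERVING_OZ = 12                # assumption: both labels describe a 12 oz serving
CUP_OZ = 1                     # assumption: same 1 oz pour as the first test

extra_sodium_mg = 85 - 45      # Mexican minus American, per the labels quoted above

# Express the extra sodium as a fraction of a teaspoon of table salt.
extra_salt_tsp = extra_sodium_mg / SODIUM_MG_PER_TSP_SALT

# Scale the per-serving difference down to a single sample cup.
extra_sodium_per_cup_mg = extra_sodium_mg * CUP_OZ / SERVING_OZ

print(f"roughly 1/{1 / extra_salt_tsp:.0f} tsp of salt per serving")
print(f"about {extra_sodium_per_cup_mg:.1f} mg extra sodium per sample cup")
```

With these assumptions the result lands in the same ballpark as the 1/64 tsp mentioned above, and the per-cup difference works out to only a few milligrams of sodium.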